AI Governance for Canadian Organizations: A Risk-Based Framework for Regulated Industries

Your AI Pilot Worked. The Rollout Did Not.

The pilot phase of the AI project succeeded. But when the rollout expanded to more users, data management problems surfaced: data was scattered across separate corporate datasets, no single team owned compliance-related inquiries, legal raised concerns about missing documentation, and AI efforts across the company were uncoordinated.

None of these were model problems. They were governance problems.

Much of what holds AI back lies beyond the model, and one of the biggest barriers to successful implementation is the lack of organizational structure around AI. In Canada, that gap is widening as the regulatory framework evolves and customer expectations rise across industries.

At Infosprint Technologies, we focus on how Canadian enterprises are establishing AI governance today through risk classification, alignment with compliance policies, and clear decisions about when a system is ready to cut over to production.

This guide covers how to build a governance framework that holds up in production — not just on paper.

Why AI Governance Has Become Urgent for Canadian Enterprises in 2026

Canada’s Bill C-27, which included the Artificial Intelligence and Data Act (AIDA), died on the order paper ahead of the 2025 federal election. Attention has since shifted to how organizations operate across Canada’s AI and digital transformation landscape: the AI strategy for 2025–2027 is now being shaped, a national AI strategy task force is consulting widely, and a renewed strategy is expected in 2026.

That legislative gap does not mean a governance gap is safe. It means the opposite.

Federal privacy law under PIPEDA still applies to how personal data moves through AI systems. Provincial laws like Quebec’s Law 25 impose specific requirements around automated decision transparency. The federal Directive on Automated Decision-Making signals where regulatory thinking is heading for public-sector organizations and the private-sector organizations that serve them.

This is not a reason to wait. It is a reason to move strategically now.

The EU AI Act is already in force, with its obligations phasing in, for organizations with exposure to the EU. Canada’s regulators have made clear that transparency, accountability, and risk-based oversight are the direction of travel.

An organization in a regulated industry that deploys AI without governance in this environment creates legal and reputational exposure that can outweigh any efficiency gains from AI.

What AI Governance Actually Means

There is a version of “AI governance” that lives in policy documents and ethics statements and never quite reaches the systems your teams are actually deploying, including AI automation solutions already in use across the organization.

Effective governance has four operational components:

- Inventory and classification:

You cannot govern what you cannot see. Most large Canadian organizations have more AI systems in production than their governance teams know about, including third-party tools embedded in SaaS products that your teams adopted without formal IT review. The starting point for any AI governance framework is a complete inventory of AI systems, classified by the risk level of the decisions they influence.

- Accountability structure:

Every AI system that affects customers, employees, or financial outcomes needs a named owner — someone accountable for its performance, compliance posture, and ongoing monitoring. This has to be established before a system goes into production, not after something goes wrong.

Your governance framework needs to answer three questions in writing:

Who approves deployment?

Who owns performance monitoring?

Who is notified when a model is not performing as expected, and what do they do next?

- Documentation and auditability:

Regulated industries are increasingly asked whether they can explain and audit AI decisions. To do so, they are expected to maintain model cards, data lineage, training documentation, and records of human oversight for every AI system that affects customers, employees, or financial outcomes.

If your teams cannot produce this documentation on request, you have an auditability gap regardless of how well the underlying models perform.

- Integration with existing risk frameworks:

AI governance should not live in a silo alongside your existing enterprise risk management, cybersecurity, and privacy programs. It should extend them. Your AI risk taxonomy should connect to your ERM register. Your AI data policies should align with your PIPEDA compliance program. Your model monitoring should feed into your security operations.

This integration is where most organizations underinvest — and where the most significant governance failures originate.

The Three Pillars of an Effective Canadian Enterprise AI Governance Framework

Building Your Framework: A Practical Implementation Sequence

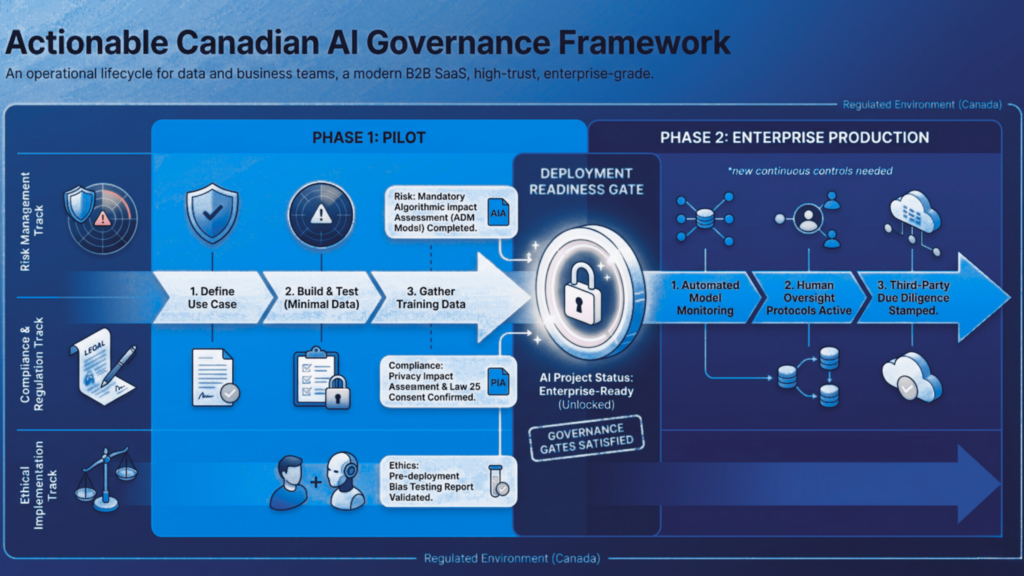

Governance frameworks fail not because of poor design, but because of poor implementation sequencing. These are the phases that consistently produce durable results.

Phase 1: Inventory and risk stratification (Weeks 1–4)

Goal: Know what you have and how risky it is.

Key actions in this phase:

- Review every AI system in production, pilot, or evaluation, including AI embedded in third-party tools

- Classify the risk of each system by how sensitive or consequential the decisions it influences are

- Create a centralized governance registry for tracking all AI systems

- Assign an interim owner for each AI system: a named individual accountable for performance, compliance, and ongoing monitoring
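The Phase 1 actions above can be sketched as a small registry. This is a minimal illustration, not a prescribed schema: the field names, the three sensitivity flags, and the flag-counting rule in `risk_tier` are all assumptions made for the example, and a real classification scheme would come from your own risk taxonomy.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskTier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class AISystemRecord:
    name: str
    stage: str                  # "production", "pilot", "evaluation", or "third-party"
    owner: str                  # interim accountable individual
    affects_individuals: bool   # influences decisions about customers or employees
    uses_personal_data: bool    # personal information flows through the system
    financial_impact: bool      # influences financial outcomes

    def risk_tier(self) -> RiskTier:
        # Hypothetical rule: count how many sensitivity flags are set.
        score = sum([self.affects_individuals,
                     self.uses_personal_data,
                     self.financial_impact])
        if score >= 2:
            return RiskTier.HIGH
        if score == 1:
            return RiskTier.MEDIUM
        return RiskTier.LOW


@dataclass
class GovernanceRegistry:
    systems: dict[str, AISystemRecord] = field(default_factory=dict)

    def register(self, record: AISystemRecord) -> None:
        # Refuse entries without a named owner, mirroring the last action above.
        if not record.owner.strip():
            raise ValueError(f"{record.name}: every system needs a named owner")
        self.systems[record.name] = record

    def by_tier(self, tier: RiskTier) -> list[str]:
        return sorted(n for n, r in self.systems.items() if r.risk_tier() is tier)


# Illustrative entries only; system and owner names are made up.
registry = GovernanceRegistry()
registry.register(AISystemRecord("credit-scoring", "production",
                                 "Owner A", True, True, True))
registry.register(AISystemRecord("meeting-summarizer", "third-party",
                                 "Owner B", False, False, False))
high_risk = registry.by_tier(RiskTier.HIGH)
print(high_risk)
```

Keeping the tier as a computed property rather than a stored field means a system is reclassified automatically when its flags change, which is one way to keep the registry from going stale.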

Phase 2: Policy and accountability structure (Weeks 4–8)

Goal: Establish the rules and the people who enforce them.

Key actions in this phase:

- Define acceptable and prohibited AI use, disclosure requirements, and escalation paths in a formal policy

- Secure review from legal, privacy, and security, with executive-level sign-off

- Establish a governance body with cross-functional representation

- Empower the governance body to review high-risk deployments, adjudicate policy exceptions, and track governance posture over time

Phase 3: Process integration (Weeks 8–16)

Goal: Make governance part of how work gets done, not a separate review step.

Core integration requirements:

- Introduce governance gates into the project lifecycle before production deployment

- Apply a governance checklist covering data provenance, consent, security controls, access management, bias testing, human oversight, audit logging, and disclosure documentation

- Integrate AI risk into the enterprise risk register

- Connect model monitoring with security operations and privacy programs

- Incorporate vendor AI assessments into third-party risk management processes
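A governance gate of the kind described above can be as simple as a checklist that blocks deployment until every item is complete. The sketch below uses the eight checklist items named in this phase; the function name `gate_review` and the dictionary-based submission format are assumptions for illustration.

```python
# Checklist items taken from the Phase 3 governance checklist above.
REQUIRED_CHECKS = (
    "data_provenance",
    "consent",
    "security_controls",
    "access_management",
    "bias_testing",
    "human_oversight",
    "audit_logging",
    "disclosure_documentation",
)


def gate_review(completed: dict[str, bool]) -> tuple[bool, list[str]]:
    """Return (approved, missing_items) for a pre-production governance gate."""
    # An item counts as missing if it is absent from the submission or marked False.
    missing = [item for item in REQUIRED_CHECKS if not completed.get(item, False)]
    return (not missing, missing)


# Hypothetical submission with one incomplete item.
submission = {item: True for item in REQUIRED_CHECKS}
submission["bias_testing"] = False
approved, missing = gate_review(submission)
print(approved, missing)  # False ['bias_testing']
```

Returning the list of missing items, rather than a bare pass or fail, gives the project team something actionable and gives the governance body a record of why a deployment was held.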

Phase 4: Monitoring and continuous improvement (Ongoing)

Goal: Keep governance active as models, regulations, and use cases evolve.

Governance is not static. Models drift. Regulations evolve. Business use cases expand in ways that were not anticipated at deployment.

Ongoing governance requires:

- Automated performance tracking and monitoring

- Periodic bias audits and annual governance reviews

- Mechanisms for employees and customers to raise concerns about AI-influenced decisions

- A defined reporting cadence to communicate AI governance posture to the board and leadership

- Clear visibility into AI risk exposure for executive decision-making
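As one example of automated monitoring, input drift can be tracked with the Population Stability Index (PSI), a common technique that compares the distribution of a feature at deployment time with its current distribution. This is a sketch of one possible check, not a method this guide prescribes; the bin count, the floor used to avoid taking the log of zero, and the 0.25 alert threshold (a widely cited rule of thumb) are assumptions to tune for your own systems.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a current sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor each proportion so empty bins do not produce log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


DRIFT_THRESHOLD = 0.25  # assumed rule-of-thumb threshold, not a regulatory value

# Synthetic data for illustration only.
baseline = [i / 100 for i in range(100)]
current = [min(x + 0.3, 1.0) for x in baseline]

psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, current)
needs_review = psi_shifted > DRIFT_THRESHOLD
print(psi_same, needs_review)
```

A check like this would typically run on a schedule, with a breach routed to the named system owner so that the alert leads to a human review rather than sitting in a dashboard.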

The Most Common AI Governance Failures: 5 Patterns to Avoid

A pillar framework gives your leadership team shared language and a directional map. But governance is built in the details: the specific decisions your data scientists, privacy officers, security leads, and risk managers make every day. These five areas are where the details matter most for Canadian enterprises in regulated industries.

- Governance that only covers models, not systems:

A model is one component of an AI system. The data pipeline, the user interface, the decision output, the human review process, and the vendor contracts are all part of the system and must be within the scope of your governance framework.

- AI Risk Assessment Models:

The methodologies, scoring frameworks, and algorithmic impact assessment approaches used to classify AI systems by risk level, and how to apply them at scale across a mixed portfolio of custom-built and vendor AI systems. Includes a practical comparison of qualitative and quantitative assessment models for high-impact regulated use cases.

- Data Privacy in AI Systems:

How PIPEDA, Quebec’s Law 25, and sector-specific data regulations apply to AI data pipelines, including training data consent, data minimization controls, data residency requirements, and what privacy-by-design architecture looks like in practice for Canadian enterprises deploying AI in regulated contexts.

- Model Bias & Ethical AI Controls:

The technical and operational mechanics of bias detection, fairness metric selection, adversarial testing, and remediation workflows, written for the cross-functional teams that have to make these decisions together.

- AI Audit & Monitoring Framework:

What to monitor, how to detect model drift and output anomalies, how to structure human review workflows, and how to build the incident response and board reporting cadence that keeps your governance program active, not theoretical. Essential reading before Phase 4 of your implementation.

The Governance Advantage

In Canada, the direction is already clear. PIPEDA reform is coming, Quebec Law 25 is already in force, and national AI policy will continue tightening expectations around transparency and oversight. Waiting for clarity is not a strategy—it’s how governance gaps turn into operational and legal problems.

For organizations in regulated industries, the question is no longer whether governance is needed. It is whether your current approach can hold up under pressure as systems scale and decisions start carrying real consequences.

If you are not certain your current approach can hold up under scrutiny, that uncertainty is itself a risk.

Get a direct assessment of your AI governance readiness

We’ll help you identify gaps, prioritize fixes, and build a framework that holds up in production, not just on paper. Contact us to start the conversation.

Frequently Asked Questions

Start with a complete inventory of all AI systems in production and classify each by risk level. Assign named accountability for every system, then build governance gates your teams must clear before any AI moves from pilot to production. Integrate AI risk into your existing risk management program, align data practices with PIPEDA, and establish an oversight body with cross-functional representation.

A PIPEDA-compliant AI framework governs how personal data enters, moves through, and exits AI systems. It requires documented consent for training data, data minimization controls, transparency when AI materially affects individuals, and defined data residency practices.

Effective AI risk management in Canadian companies requires treating AI risk as an extension of existing enterprise risk, not as a separate program. This means adding an AI risk taxonomy to your ERM register, applying model risk management principles to high-impact systems, and including third-party AI tools in vendor risk assessments.

A common mistake is rushing to launch a pilot by skipping essential governance steps, which can lead to technical debt later. Before deploying any AI system, make sure to check off key items: data access agreements, security measures, bias testing, human oversight, audit logging, and disclosure documentation. This helps ensure a more reliable and trustworthy system.

Canada has had no federal AI law since Bill C-27 lapsed. In the meantime, the voluntary AI code of conduct, which covers fairness, transparency, accountability, and human oversight, serves as the ethical baseline for private-sector organizations. Adopting these standards now positions your organization well for the formal AI legislation anticipated before the current federal mandate ends.